AI can be fooled into making mistakes, sometimes risking lives, but quantum computing could provide a strong defence

Machine learning is a field of artificial intelligence (AI) in which computer models become experts in various tasks by consuming large amounts of data, rather than by having a human explicitly program that expertise.

For example, modern chess AIs do not need to be taught chess strategies by human grandmasters, but can ‘learn’ them independently by playing millions of games against copies of themselves.

This is invaluable in situations where writing down explicit instructions is impractical, if not impossible – how do you define a mathematical function that can tell you if a picture contains a cat or a dog?

Human children never learn any such function, but rather see many examples of cats and dogs, then eventually develop an understanding of their differences.

Machine learning is about replicating this process in computers.

But despite their incredible successes and increasingly widespread deployment, machine learning-based frameworks remain highly susceptible to adversarial attacks – that is, malicious tampering with their input data that causes them to fail in surprising ways.

For example, image-classifying models (which analyse photos to recognise a wide variety of objects and features) can often be fooled by adding well-crafted alterations (known as perturbations) to their input images. These alterations can be so small that they are imperceptible to the human eye. And this can be exploited.
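To make the idea of a perturbation concrete, here is a minimal sketch in Python with NumPy. It is an illustration under stated assumptions, not the method used in our research: the "classifier" is a hypothetical linear model with random weights, the "image" is random pixel values between 0 and 1, and the attack nudges every pixel by the same tiny amount in the direction that most reduces the model's confidence, in the spirit of the fast gradient sign method. The names `w`, `b`, `score`, `predict` and `epsilon` are all invented for this example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical linear classifier over a flattened 28x28 greyscale image.
# Real image classifiers are deep neural networks; a linear model is
# assumed here only to keep the arithmetic visible.
n_pixels = 28 * 28
w = rng.normal(size=n_pixels)  # made-up weights
b = 0.0                        # made-up bias


def score(x):
    """Raw model output: positive means 'dog', negative means 'cat'."""
    return float(x @ w + b)


def predict(x):
    """Class label implied by the sign of the score."""
    return "dog" if score(x) > 0 else "cat"


# A stand-in "image": random pixel intensities in [0, 1].
x = rng.uniform(0.0, 1.0, size=n_pixels)
s = score(x)

# For a linear model, the gradient of the score with respect to the
# pixels is simply w. Moving every pixel by epsilon against sign(w)
# (or with it, if the score is negative) changes the score by
# epsilon * sum(|w|), so this epsilon is just large enough to flip it.
epsilon = 1.1 * abs(s) / np.sum(np.abs(w))
x_adv = x - epsilon * np.sign(s) * np.sign(w)

# Note: for simplicity the perturbed pixels are not clipped back to [0, 1].
print("original prediction: ", predict(x))
print("perturbed prediction:", predict(x_adv))
print("largest change to any pixel:", np.max(np.abs(x_adv - x)))
```

No single pixel changes by more than `epsilon`, yet the predicted label flips, because thousands of tiny changes all push the score the same way. Attacks on real neural networks exploit the same accumulation effect, although computing the perturbation takes more work.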

The continued vulnerability to attacks like these also raises serious questions about the safety of deploying machine learning models, such as neural networks, in potentially life-threatening situations. This includes applications like self-driving cars, where the system could be confused into driving through an intersection by an innocuous piece of graffiti on a stop sign.

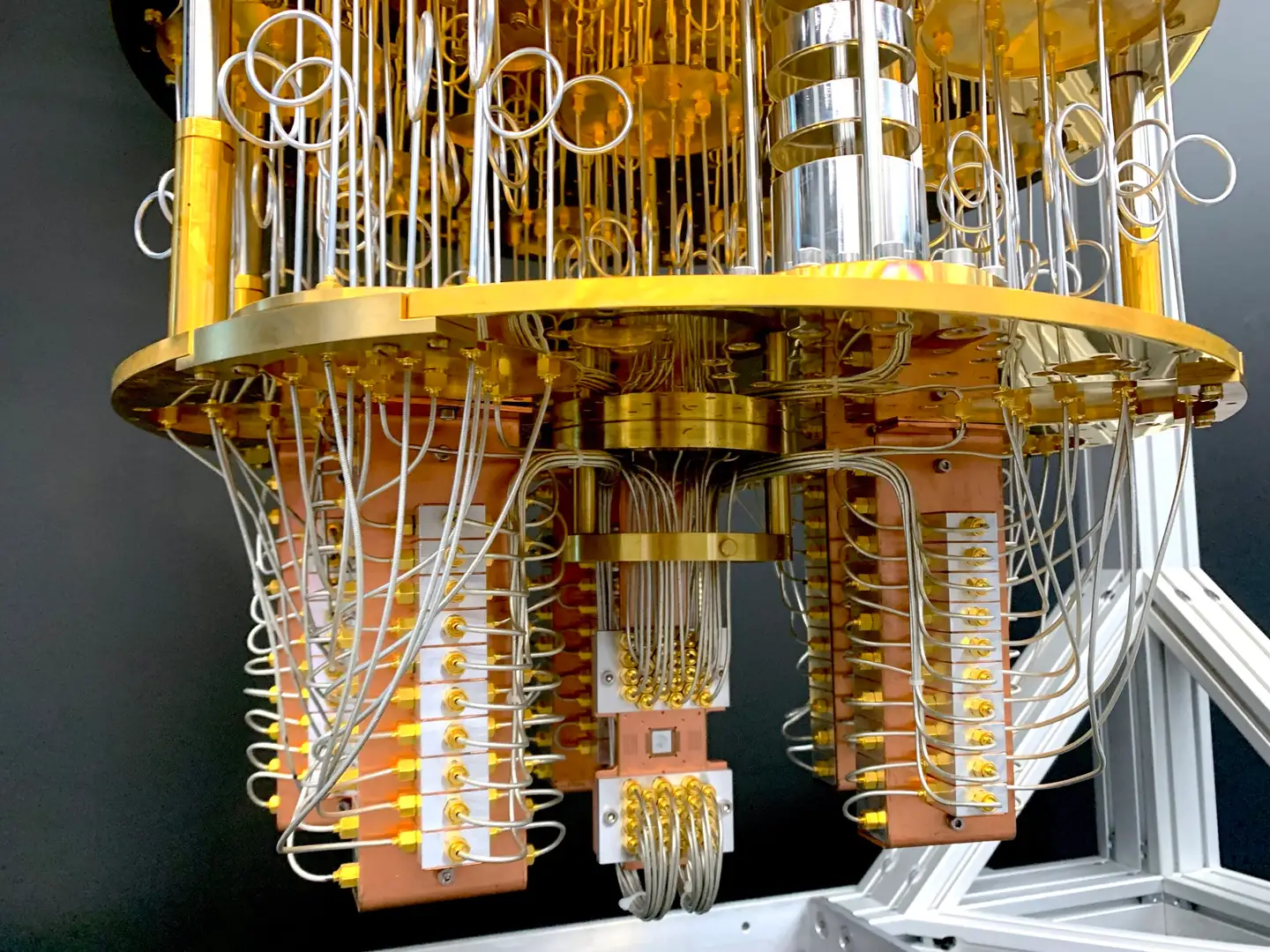

At a crucial time when the development and deployment of AI are rapidly evolving, our research team is looking at ways we can use quantum computing to protect AI from these vulnerabilities.